Why the Quantum Computing Hype Cycle Hasn't Crashed

Thirty years of progress and still no quantum winter

Every few years, the tech world anoints a new technology as “world-changing” (see: nanotech, graphene, virtual reality).

Within a decade, most have faded from the headlines.

So why has quantum computing remained stubbornly lodged in the zeitgeist for the past thirty years? And are we actually any closer to unleashing its full capabilities?

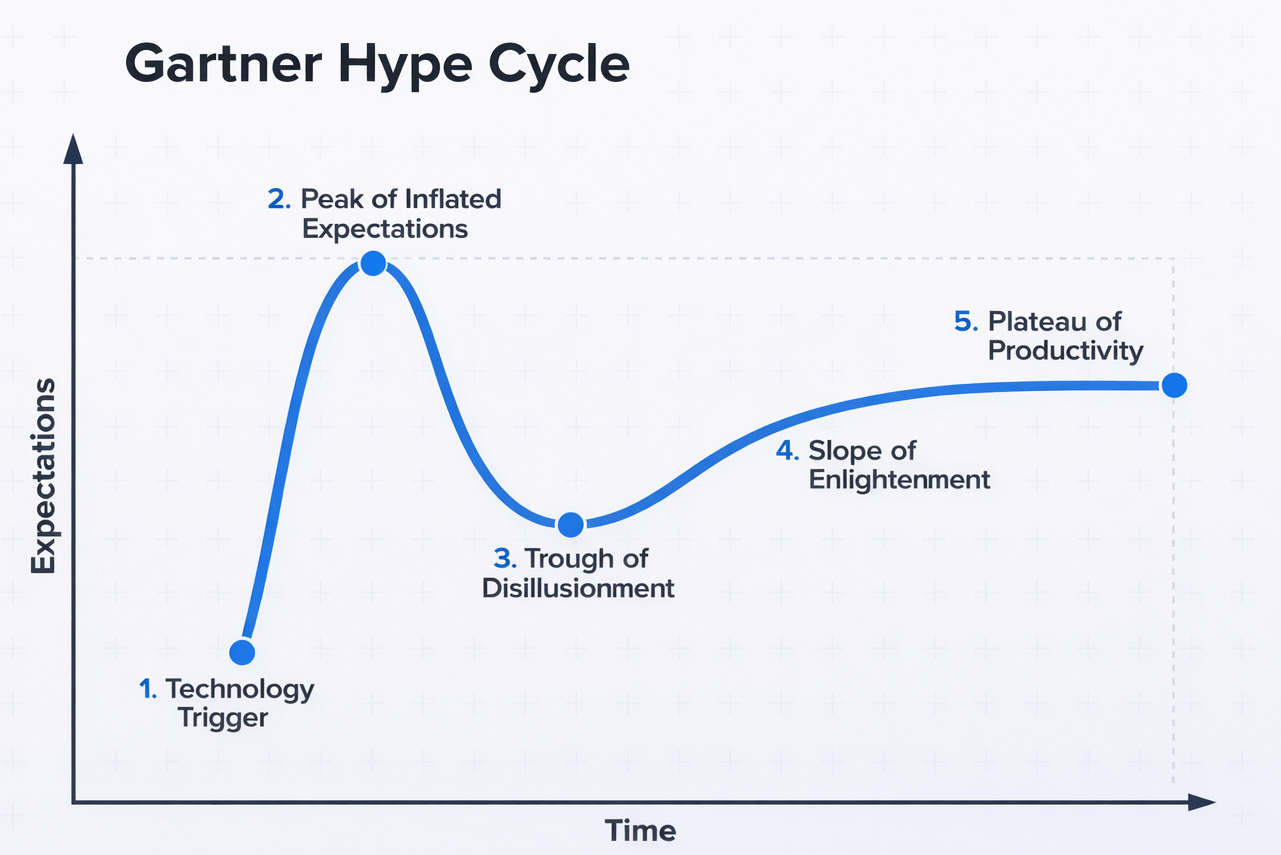

The Gartner Hype Cycle

In 1995, analyst Jackie Fenn introduced the Hype Cycle, a model describing the predictable rise and fall of excitement around new technologies:

Innovation Trigger: a breakthrough ignites media imagination and excitement about a new technology.

Peak of Inflated Expectations: a frenzy of publicity creates over-enthusiasm and unrealistic expectations.

Trough of Disillusionment: the technology inevitably fails to deliver quickly; interest wanes and it becomes unfashionable.

Slope of Enlightenment: survivors of the trough begin to understand the technology’s real benefits and practical uses.

Plateau of Productivity: the technology becomes widely accepted, stable, useful, and a bit boring (the suburbia of tech).

Most technologies follow this arc with surprising consistency. Quantum computing, however, seems to be behaving differently.

Less Trigger, More Trickle

Unlike technologies such as graphene, which were thrust into the public consciousness following a single breakthrough, the concept of “quantum computing” was built around a slow accumulation of ideas and experiments.

In 1982, Richard Feynman suggested that quantum systems could simulate physics better than classical computers. Three years later, David Deutsch expanded the idea and introduced the concept of a universal quantum computer - a fully programmable machine built around quantum bits, or qubits.

Classical computers operate on bits, which can be either 0 or 1. Quantum computers use qubits. Thanks to quantum effects like superposition and entanglement, a qubit can exist in a combination of both states at once, which lets carefully designed quantum algorithms tackle certain problems far more efficiently than checking possibilities one by one.
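For readers who like to see the idea in code, here is a minimal sketch of a single qubit in plain Python. It is a classical simulation for illustration only, and the function names (`hadamard`, `probabilities`) are my own rather than from any quantum library. A qubit is represented as a pair of amplitudes, and the Hadamard gate puts it into an equal superposition of 0 and 1.

```python
import math

def hadamard(state):
    """Apply a Hadamard gate to a single-qubit state (amp_0, amp_1)."""
    a, b = state
    s = 1 / math.sqrt(2)
    return (s * (a + b), s * (a - b))

def probabilities(state):
    """Chance of measuring 0 or 1: the squared magnitude of each amplitude."""
    return tuple(abs(x) ** 2 for x in state)

zero = (1.0, 0.0)            # a qubit that is definitely 0
plus = hadamard(zero)        # an equal superposition of 0 and 1
probs = probabilities(plus)  # roughly (0.5, 0.5)

# Applying the gate again makes the two paths interfere and restores 0.
back = hadamard(plus)

# Simulating n qubits classically needs 2**n amplitudes.
amplitudes_for_50_qubits = 2 ** 50
```

The last line hints at why classical simulation runs out of road: every extra qubit doubles the number of amplitudes to track. The second Hadamard shows the other half of the story, which is that quantum algorithms get their power from interference between amplitudes, not simply from trying every answer in parallel.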

At this point, no one outside of academic circles really took much interest. But that changed in 1994 with Shor’s algorithm, which showed that a sufficiently powerful quantum computer could factor large integers exponentially faster than the best known classical methods.

That might not sound world-changing. But modern internet security relies heavily on RSA encryption (Rivest–Shamir–Adleman) to protect everything from bank transactions to state secrets. RSA relies on the assumption that factoring very large numbers is practically impossible for classical computers. Shor showed that the assumption wouldn’t hold in a quantum world.

In other words, any country with access to a powerful quantum computer could undermine the cryptographic bedrock of the digital world.
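To make the link between factoring and RSA concrete, here is a toy sketch in Python. It assumes deliberately tiny primes (61 and 53) and a public exponent of 17, all chosen purely for illustration, and it skips the padding that real RSA needs. The function names are hypothetical. It shows that anyone who can factor the public number `n` can rebuild the private key.

```python
def make_keys(p, q, e=17):
    """Build a toy RSA key pair from two primes (illustrative values only)."""
    n = p * q
    phi = (p - 1) * (q - 1)
    d = pow(e, -1, phi)  # private exponent: modular inverse (Python 3.8+)
    return (n, e), d

def factor(n):
    """Brute-force trial division: instant here, hopeless for a 2048-bit n."""
    f = 2
    while f * f <= n:
        if n % f == 0:
            return f, n // f
        f += 1
    raise ValueError("n is prime")

def recover_private_key(public_key):
    """An attacker who can factor n recomputes the private exponent."""
    n, e = public_key
    p, q = factor(n)
    return pow(e, -1, (p - 1) * (q - 1))

public_key, private_key = make_keys(61, 53)
n, e = public_key

ciphertext = pow(42, e, n)                # encrypt the message 42
stolen_d = recover_private_key(public_key)
recovered = pow(ciphertext, stolen_d, n)  # decrypts back to 42
```

With primes this small, the `factor` step takes microseconds. With the primes used in real keys, trial division and every other known classical method becomes impractical, and that is exactly the step Shor’s algorithm would speed up.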

Perhaps unsurprisingly, following this discovery, governments began writing rather large cheques for quantum computing research…

Realistic Expectations

What’s unusual about quantum computing is that its world-changing implications were proven long before anything resembling a real machine existed.

And cryptography may ultimately be the least interesting part. When realised at utility scale, quantum computing promises to revolutionise much of science and engineering.

Classical computers struggle to simulate quantum systems, which means modelling complex molecules, materials, and chemical reactions quickly becomes intractable. A sufficiently powerful quantum computer could simulate these systems directly, allowing scientists to screen through molecules and materials - saving time spent pursuing dead ends in the lab. This would dramatically accelerate the discovery of new pharmaceuticals, advanced battery materials, superconductors, and catalysts for industrial processes — tangible improvements that ultimately affect the lives of everyone.

But a promise alone doesn’t sustain interest for decades. What keeps the field alive is that progress, while slow, has never stopped.

Started From the Bottom and Now We’re Where?

Since the field’s genesis, experimentalists have been on a continuous mission to scale up the quantum systems they’re building.

In 2001, researchers at IBM and Stanford demonstrated Shor’s algorithm on a seven-qubit quantum computer, successfully factoring the number 15 into 3 and 5. The machine relied on nuclear magnetic resonance (NMR) qubits, where quantum information is encoded in the nuclear spins of molecules. It was an ingenious approach, but ultimately a technological dead end. Because each qubit had to correspond to a distinct nucleus within a molecule, the systems were fundamentally limited to around 10–12 qubits. So the maths was unlikely to progress beyond second grade.
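For the curious, the sketch below shows the classical scaffolding around Shor’s algorithm in Python, applied to 15. It is an illustration under stated assumptions, not a reconstruction of the 2001 experiment: the function names are my own, the base `a = 7` is an illustrative choice, and the order-finding step is done here by brute force, whereas that is the one step a quantum computer would perform efficiently.

```python
from math import gcd

def find_order(a, n):
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    Assumes gcd(a, n) == 1. This is the step Shor's algorithm
    hands to the quantum computer.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_via_order(n, a):
    """Turn the order of a modulo n into a pair of factors, if possible."""
    shared = gcd(a, n)
    if shared != 1:
        return shared, n // shared  # lucky guess: a already shares a factor
    r = find_order(a, n)
    if r % 2 == 1:
        return None                 # odd order: try a different a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None                 # trivial square root: try a different a
    p = gcd(half - 1, n)
    return p, n // p

order = find_order(7, 15)           # 7, 4, 13, 1 -> order 4
factors = factor_via_order(15, 7)   # (3, 5)
```

Everything except `find_order` is cheap on a classical machine. The brute-force loop is what becomes impossible as the numbers grow, which is why the quantum version of that single step matters so much.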

Throughout the 2000s, researchers experimented with a wide range of alternative qubit technologies: superconducting circuits, trapped ions, photons, and neutral atoms. Each platform offered different trade-offs in stability, control, and scalability.

In 2019, Google announced it had achieved “quantum supremacy”. Its 53-qubit Sycamore processor, built from superconducting circuits etched onto a chip and cooled to near absolute zero, performed a specialised sampling calculation in minutes that would have taken classical supercomputers vastly longer to reproduce. Google may have claimed “supremacy” a little prematurely, and the claim was hotly contested (IBM argued the classical comparison had been overstated), but it was a milestone. Quantum computing suddenly looked less like an academic curiosity and more like the early stages of an industry.

Investor enthusiasm followed quickly. Between 2021 and 2022, companies like IonQ, Rigetti, and D-Wave went public through SPAC mergers, bringing experimental quantum hardware onto the stock market.

There was, however, a small problem.

Most quantum companies were generating only a few million dollars in annual revenue, largely from government contracts or research partnerships. Meanwhile, operating losses ran into tens or hundreds of millions as firms continued building experimental hardware. As interest rates rose and speculative capital retreated, many quantum stocks fell 70–90% from their peaks.

For a moment, it looked like the trough of disillusionment had arrived.

However, while the stocks dipped, the technology kept advancing: qubit counts rose across multiple architectures, error-correction techniques improved, and researchers pushed closer to scalable quantum systems.

By 2024, investor enthusiasm had returned. Some publicly traded quantum firms saw their shares rise between 300% and nearly 1,900% within roughly eighteen months.

Now, in 2026, quantum computing still isn’t commercially useful, but progress remains relentless: the machines keep improving, the qubits keep multiplying, and that seems to be keeping the trough at bay.

There Won’t Be a Quantum Winter

What prompted me to think about this was a conversation I had while creating a recent video about PsiQuantum, a company developing a quantum architecture based on photonic qubits and aiming to build a utility-scale quantum computer.

The company spun out of the University of Bristol in 2016 and has spent nearly a decade pursuing an unusually ambitious strategy: machines with millions of qubits capable of solving real-world problems.

During our conversation, their co-founder and Chief Technologist, Mark Thompson, mentioned something almost in passing: some people in the field had been fearing the arrival of “a quantum winter”, where the world lost interest in making quantum computing a reality. So far, this has never quite materialised. On one hand, this is because of the paradigm shift a utility-scale quantum computer would cause. But I also suspect it’s because of people like Mark and the team at PsiQuantum (along with many other researchers and companies) who have spent decades consistently pushing the technology forward. So whilst quantum is in the headlines a lot, it’s usually because an advancement has moved us one step closer to a new reality.

The Gartner model suggests every technology eventually falls out of favour.

Quantum computing, so far, seems to be avoiding that fate.

See you next time

Ben

(If you’d like to watch my full conversation with Mark Thompson about PsiQuantum’s approach to photonic quantum computing, it’s available for members of my YouTube channel and Patreon supporters.)